Exploring Modern Test Automation and Architectural Standards Through Insider AI Driven QA Bootcamp 2026

In the Software Testing Life Cycle (STLC), Quality Assurance (QA) is no longer just about verifying that "the code works without errors." We are in an era where QA is evolving into AI-driven, smarter, easily maintainable, and scalable systems. Recently, I had the opportunity to lay the foundations of this transformation by attending the first session of the Insider AI Driven QA Bootcamp 2026.

In this article, I want to share the critical lessons I learned regarding Test Automation Fundamentals, the Page Object Model (POM) approach, coding standards, and Visual Testing, an indispensable part of modern QA.

1. Why Test Automation Matters and the Scale of "Atlas"

Test automation is essentially the process of automating manual test procedures using software tools. When tests are integrated into CI/CD pipelines, they run automatically after every commit, so regressions are caught early and releases become safer. This minimizes human error and makes test coverage highly scalable.

One of the most eye-opening parts of the bootcamp was seeing how these theoretical benefits are managed on a massive scale. Insider tests all of its product channels (Web Push, Email, SMS, WhatsApp, and Architect) using a unified framework called "Atlas". To understand the scale: Atlas coordinates over 8,500 test files, 2,000+ Page Objects, and 500+ Visual Tests utilizing more than 2,600 visual snapshots, all under one roof.

2. Sustainable Architecture: Page Object Model (POM)

The heart of scalable test automation lies in the right architecture. We took a deep dive into the Page Object Model (POM), a design pattern that models every UI page as a Python class. The fundamental rules and advantages of this approach include:

- Centralized Management: Locators are defined as UPPERCASE tuples at the class level, separate from the test code. Thanks to POM, if a locator changes, you only need to update the relevant page object, rather than modifying all the tests.

- Mandatory Page Checks: Every page must inherit from PageBase and include a check() method that verifies the page has successfully loaded.

- Fluent Chaining: Methods return the next page object, making test writing as fluent and readable as reading a story.
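The three rules above can be sketched in a few lines. This is a minimal illustration, not Atlas code: FakeDriver stands in for a real WebDriver so the example runs without Selenium, LoginPage, DashboardPage, and their selectors are hypothetical, and calling check() from the constructor is my assumption about how PageBase might enforce the page check.

```python
# Minimal, Selenium-free sketch of the POM rules listed above.
# FakeDriver and all page, locator, and method names are hypothetical.

CSS = "css selector"  # same string value as Selenium's By.CSS_SELECTOR


class FakeDriver:
    """Stand-in for a WebDriver: knows which locators are present."""

    def __init__(self, present):
        self.present = set(present)
        self.clicked = []

    def is_present(self, locator):
        return locator in self.present

    def click(self, locator):
        self.clicked.append(locator)


class PageBase:
    """Every page inherits from this and must implement check()."""

    def __init__(self, driver):
        self.driver = driver
        self.check()  # assumption: fail fast if the page did not load

    def check(self):
        raise NotImplementedError("each page must define check()")


class DashboardPage(PageBase):
    HEADER = (CSS, "h1.dashboard-title")

    def check(self):
        assert self.driver.is_present(self.HEADER), "Dashboard header not found"


class LoginPage(PageBase):
    # Locators live here as UPPERCASE tuples, not in the tests.
    LOGIN_BUTTON = (CSS, "button#login")

    def check(self):
        assert self.driver.is_present(self.LOGIN_BUTTON), "Login button not found"

    def login(self):
        self.driver.click(self.LOGIN_BUTTON)
        return DashboardPage(self.driver)  # fluent chaining: return the next page


driver = FakeDriver({LoginPage.LOGIN_BUTTON, DashboardPage.HEADER})
dashboard = LoginPage(driver).login()
print(type(dashboard).__name__)
```

If the login button's selector changes, only LOGIN_BUTTON needs an update; the test line `LoginPage(driver).login()` stays the same.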

The Atlas framework is highly modular, organized into directories such as base/ (core framework, helpers, DB controllers), pages/ (the POM layer with 51 modules), tests/API/ (backend and flow tests), tests/Panel/ (UI panel tests), and tests/Gandalf/ (visual regression tests).

3. Coding Standards and "Wait" Strategies

High-quality test software remains maintainable only when strictly adhering to coding standards. The bootcamp strongly emphasized Python (PEP 8) and Selenium best practices:

- Naming Conventions: Class names should be written in PascalCase; method, variable, and file names in snake_case; and constants in UPPER_SNAKE_CASE.

- Independence and Responsibility: Every test must run independently and should not rely on a specific execution sequence. Following the "Single Responsibility" principle, each test must verify only one behavior, and its name should clearly explain what it is testing.

- Meaningful Assertions: When an assertion fails, the error message provided by the system must offer meaningful information about the failure situation.
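To make these standards concrete, here is a small sketch that applies all three at once. The ShoppingCart class, the MAX_CART_ITEMS constant, and both test names are invented for illustration and are not from the bootcamp material.

```python
# Hypothetical example: names, constants, and the cart logic are illustrative only.

MAX_CART_ITEMS = 3  # constant: UPPER_SNAKE_CASE


class ShoppingCart:  # class: PascalCase
    def __init__(self):
        self.items = []

    def add_item(self, item_name):  # method and variable: snake_case
        if len(self.items) >= MAX_CART_ITEMS:
            raise ValueError(f"cart is limited to {MAX_CART_ITEMS} items")
        self.items.append(item_name)


def test_add_item_increases_item_count():
    cart = ShoppingCart()  # each test builds its own state
    cart.add_item("book")
    assert len(cart.items) == 1, (
        f"expected 1 item after add_item, got {len(cart.items)}"
    )


def test_add_item_rejects_items_beyond_limit():
    cart = ShoppingCart()
    for index in range(MAX_CART_ITEMS):
        cart.add_item(f"item-{index}")
    try:
        cart.add_item("one-too-many")
    except ValueError as error:
        assert "limited" in str(error), f"unexpected error message: {error}"
    else:
        raise AssertionError("adding beyond MAX_CART_ITEMS should raise ValueError")


# Execution order does not matter because no test relies on another's state.
test_add_item_rejects_items_beyond_limit()
test_add_item_increases_item_count()
```

Each test name states the single behavior it verifies, and each assertion message says what was expected and what actually happened, so a failure is readable without opening the test body.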

Wait strategies are one of automation's biggest pain points, and the session laid out a few golden rules. Hard-coded waits like time.sleep(5) are a huge mistake: they slow every run by the full delay and still fail when the page takes longer. Instead, tests must use explicit waits (e.g., visibility_of_element_located or element_to_be_clickable). find_element() does not wait, so an explicit wait must be added before it. Because the check() method already waits for the page to load, double waits must be avoided. Most importantly, if an element is not found, you should fix the page object method, not the test itself.
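The difference between a fixed sleep and an explicit wait is easiest to see in code. The sketch below is a stdlib-only stand-in that polls a condition until it holds or a timeout expires; wait_until, WaitTimeoutError, and the simulated element are hypothetical names of mine. With Selenium itself, the equivalent call would be `WebDriverWait(driver, 10).until(EC.visibility_of_element_located(locator))`.

```python
import time


class WaitTimeoutError(Exception):
    """Raised when the condition never becomes truthy (hypothetical name)."""


def wait_until(condition, timeout=5.0, poll_interval=0.1):
    """Poll condition() until it returns a truthy value or the timeout elapses."""
    deadline = time.monotonic() + timeout
    while True:
        result = condition()
        if result:
            return result
        if time.monotonic() >= deadline:
            raise WaitTimeoutError(f"condition not met within {timeout} seconds")
        time.sleep(poll_interval)


# Simulated element that becomes visible about 0.3 seconds from now.
visible_at = time.monotonic() + 0.3


def element_is_visible():
    return time.monotonic() >= visible_at


started = time.monotonic()
wait_until(element_is_visible, timeout=2.0)
elapsed = time.monotonic() - started
print(f"waited {elapsed:.2f}s instead of a fixed 5s sleep")
```

The wait returns as soon as the condition holds, so a fast page costs almost nothing, while a slow page gets the full timeout before the test fails with a clear error.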

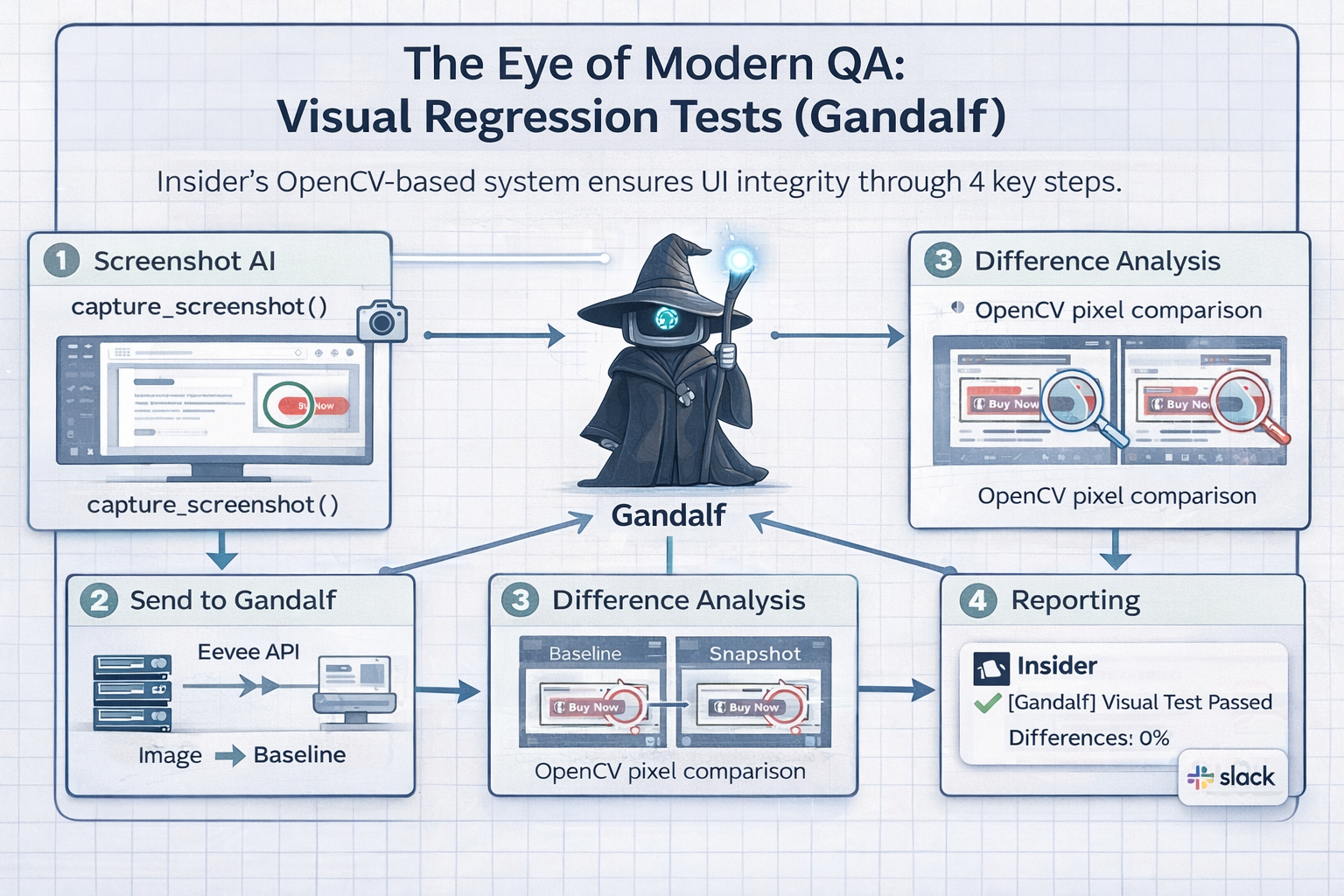

4. The Eye of Modern QA: Visual Regression Tests (Gandalf)

Merely ensuring that elements exist and are clickable isn't enough for the ultimate user experience; unexpected changes in button colors, positions, or layout are also critical bugs. This is where Gandalf, Insider's OpenCV-based visual regression test system, comes into play.

Gandalf automatically detects UI changes. The system ensures UI integrity through 4 simple steps:

- Screenshot AI: A snapshot of the page is captured using capture_screenshot().

- Send to Gandalf: The image is sent via the Eevee API to be compared against a baseline.

- Difference Analysis: OpenCV pixel comparison generates a diff to spot design anomalies.

- Reporting: Notifications and results are instantly sent to the team via Slack integration.
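The difference-analysis step can be illustrated with a toy pixel comparison. This is not Gandalf's algorithm or API, which the session did not show in detail: it is a pure-Python stand-in where images are lists of grayscale rows, and diff_ratio, the tolerance, and the pass threshold are all assumptions of mine. An OpenCV version would typically do the per-pixel subtraction with `cv2.absdiff` on image arrays.

```python
# Pure-Python stand-in for pixel comparison; images are lists of grayscale rows.
# The function name, tolerance, and threshold are hypothetical, not Gandalf's API.


def diff_ratio(baseline, candidate, tolerance=10):
    """Return the fraction of pixels whose values differ by more than tolerance."""
    if len(baseline) != len(candidate) or any(
        len(row_a) != len(row_b) for row_a, row_b in zip(baseline, candidate)
    ):
        raise ValueError("images must have identical dimensions")
    total = sum(len(row) for row in baseline)
    if total == 0:
        return 0.0
    changed = sum(
        1
        for row_a, row_b in zip(baseline, candidate)
        for pixel_a, pixel_b in zip(row_a, row_b)
        if abs(pixel_a - pixel_b) > tolerance
    )
    return changed / total


baseline = [[255, 255, 255, 255] for _ in range(4)]  # 4x4 white image
candidate = [row[:] for row in baseline]
candidate[0][0] = 0    # one pixel turned black, e.g. a shifted border
candidate[1][1] = 250  # small rendering noise, within tolerance

MAX_ALLOWED_RATIO = 0.01  # assumed pass threshold
ratio = diff_ratio(baseline, candidate)
print(f"changed pixels: {ratio:.2%}, passed: {ratio <= MAX_ALLOWED_RATIO}")
```

The tolerance keeps anti-aliasing noise from failing the test, while the ratio threshold decides how much real change is enough to flag the snapshot for review.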

Closing and Demo Analysis

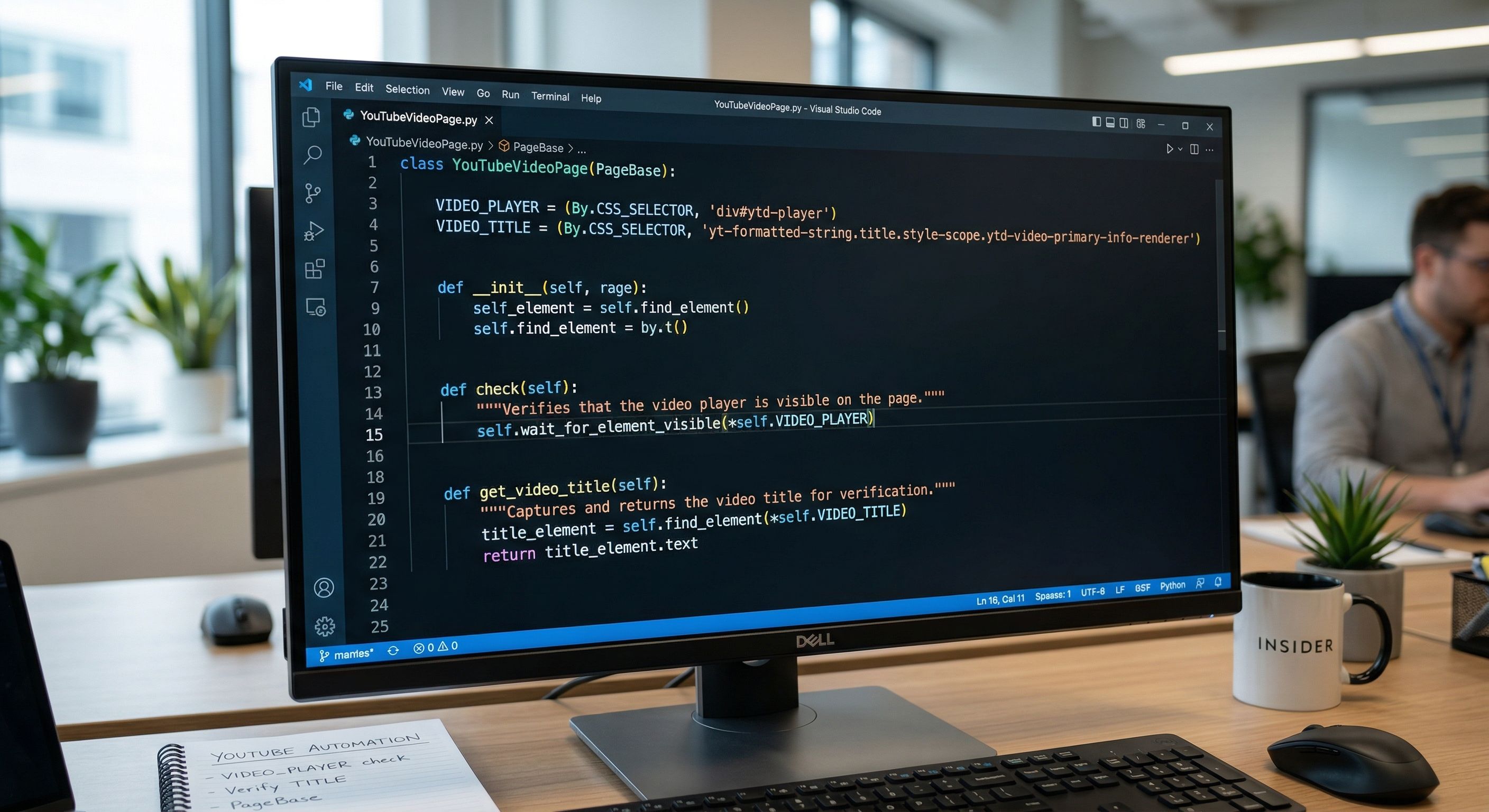

To reinforce the architecture we learned, we ended the session by analyzing a real test automation scenario running on YouTube. The demo consisted of a 3-step flow: Navigate to YouTube, Search for 'Insider test automation', and Click the first video and verify its title.

During this step-by-step code review, we analyzed the YouTubeVideoPage class code. We observed:

- How the class inherits from PageBase.

- How locators (VIDEO_PLAYER and VIDEO_TITLE) are defined using By.CSS_SELECTOR.

- How the check() method smartly uses self.wait_for_element_visible(self.VIDEO_PLAYER) to verify that the video page is loaded.

- And finally, how the get_video_title() method dynamically captures the text of the video title (title_element.text) to be used in assertions.
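Putting those observations together, the class might look roughly like the sketch below. Only the names PageBase, YouTubeVideoPage, VIDEO_PLAYER, VIDEO_TITLE, check(), get_video_title(), and wait_for_element_visible() come from the session. The selectors, the FakeDriver and FakeElement stand-ins, and the PageBase internals are my assumptions so the example runs without Selenium or a browser.

```python
# Reconstruction sketch; selectors, fakes, and PageBase internals are assumptions.

CSS_SELECTOR = "css selector"  # string value of Selenium's By.CSS_SELECTOR


class FakeElement:
    def __init__(self, text=""):
        self.text = text


class FakeDriver:
    """Stand-in for a WebDriver backed by a dict of locator -> element."""

    def __init__(self, elements):
        self.elements = elements

    def find_element(self, by, value):
        return self.elements[(by, value)]


class PageBase:
    def __init__(self, driver):
        self.driver = driver
        self.check()  # assumption: the page check runs on construction

    def wait_for_element_visible(self, locator):
        # A real implementation would poll with an explicit wait here.
        if locator not in self.driver.elements:
            raise LookupError(f"element {locator} never became visible")
        return self.driver.find_element(*locator)

    def check(self):
        raise NotImplementedError("each page must define check()")


class YouTubeVideoPage(PageBase):
    VIDEO_PLAYER = (CSS_SELECTOR, "#movie_player")  # hypothetical selector
    VIDEO_TITLE = (CSS_SELECTOR, "h1.video-title")  # hypothetical selector

    def check(self):
        self.wait_for_element_visible(self.VIDEO_PLAYER)

    def get_video_title(self):
        title_element = self.wait_for_element_visible(self.VIDEO_TITLE)
        return title_element.text


driver = FakeDriver({
    YouTubeVideoPage.VIDEO_PLAYER: FakeElement(),
    YouTubeVideoPage.VIDEO_TITLE: FakeElement("Insider test automation demo"),
})
title = YouTubeVideoPage(driver).get_video_title()
assert "Insider" in title, f"expected 'Insider' in video title, got {title!r}"
```

The test only calls get_video_title() and asserts on the result; waiting and locating stay inside the page object, which is where a fix belongs if the element is not found.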

This bootcamp clearly demonstrated that test automation is not just a bunch of scripts; it is backed by strict standards, architectural discipline, and deep strategy.

I’m extremely excited about the upcoming sessions, which will cover AI-driven test approaches and advanced test infrastructures! Let’s discuss the biggest architectural challenges you face in your test automation processes in the comments.